PubTech Radar Scan: Issue 41

Usual mix of academic publishing tech news, launches, AI developments, and longer reads.

Here’s my latest roundup of what’s been happening in academic publishing tech, from promising new tools to the latest AI controversies. If you have a couple of minutes, please fill in my AI usage survey. Which models are you using? How often? What’s delivering value? Takes under 5 minutes. Contributors get early access to results before I share them more widely in March.

🆕 News:

Kudos is inviting publishers to join/sponsor its “Taming the Crocodile” study, which examines the growing impact of zero-click search and AI summaries. The research aims to help publishers protect usage, revenues, and research integrity in an increasingly click-free world.

Rob Johnson reports that Elsevier is shutting down Researchfish (the platform tracking research outcomes for UKRI, NIHR, and 90+ other funders). I’d completely forgotten about “Researchfishgate” when they responded to critics on Twitter with a vaguely threatening message: “We understand that you’re not keen on reporting on your funding through Researchfish but this seems quite harsh and inappropriate. We have shared our concerns with your funder.”

The UKRI’s Metascience Unit has announced “a new funding opportunity: a Scientometrics Sandpit. We are convening researchers and practitioners to co-create projects that develop, validate and critically assess novel scientometric indicators for use in research assessment.”

What does $200,000 buy you in the AI training data market today? Apparently, high-speed access to a pirated library of more than 60 million books, payable in crypto.

GetFTR now lets AI-powered research tools check users’ access rights in real time, using the Model Context Protocol to route them to the appropriate full text.

Retractions go mainstream. The Sunday Times [Paywall] reports that 500 papers are now being retracted per month. The article covers overwhelmed peer review, AI-generated slop, paper mills, editor bribery, and incentive systems rewarding flashy findings.

67 Bricks’ report on B2B publishing tech maturity shows AI adoption is high, but impact is low, arguing that competitive advantage will only come from connecting data, treating AI as a real transformation, and shifting from static publishing to signal-driven, workflow-embedded products.

🚀 Launches:

Opennote has launched Papers, an AI-native academic writing suite built on Feynman, Opennote’s teaching-focused AI that helps students learn by reasoning through problems, using visuals, and adapting to their progress.

OpenAI launched Prism. Is it an Overleaf killer? I suspect not. Overleaf has tens of millions of users, so it’s unlikely they’ll all migrate to a new product unless it does something genuinely new. I see this less as a bid to replace Overleaf, or to acquire training data, and more as an attempt to own the scientific workflow, or possibly the scientific process. [Also see discussion on LinkedIn and note on Bohrium, another AI Science solution, below]

I’m more struck by the contrast between these two videos and what they reveal about how science and writing actually happen. In the first, Kevin Weil delivers a full-on Silicon Valley, fast-talking GTM Sales pitch. Fast, confident, visionary. AI will accelerate science just as it has accelerated coding, complete with big comparisons and future visions of robotic labs. In the second video, a quiet, slow-paced demo of Crixet (the previous name for Prism), you see the reality of human work. Victor Powell, the developer, is in his kitchen, minding the baby (the really high-value work), while talking to an AI tool to handle writing a scientific paper. As a non-LaTeX person, I’ve always found it arcane and faintly archaic. I’m not convinced anyone will need to write in LaTeX in ten years’ time, but then again, many arcane systems survive far longer than anyone expects.

📈 Interesting Stats:

Included simply because the numbers caught my eye:

Between 2020 and 2025, submissions to NeurIPS more than doubled, rising by roughly 128% (about 2.3 times) from 9,467 to 21,575.

Cabells now includes over 20,000 journals in its Predatory Reports database. 😯

DOAJ on scraper bots: “The last six months of 2025 saw a 419% increase over the same period in 2024, culminating in a single day in mid-November where our traffic spiked to 968% greater than a year earlier.”
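A quick aside for anyone sanity-checking figures like these: "increased by X%" and "X times the original" are easy to mix up. The sketch below is only an illustration using the numbers quoted above; the function names are my own, not from any of the sources.

```python
def percent_increase(old: float, new: float) -> float:
    """Relative change from old to new, as a percentage of old."""
    if old == 0:
        raise ValueError("old must be non-zero")
    return (new - old) / old * 100.0


def multiple_from_increase(increase_pct: float) -> float:
    """Convert 'an increase of X%' into 'N times the original'."""
    return 1.0 + increase_pct / 100.0


# NeurIPS submission counts quoted above.
neurips_2020, neurips_2025 = 9_467, 21_575
growth = percent_increase(neurips_2020, neurips_2025)
print(f"NeurIPS: +{growth:.1f}% ({neurips_2025 / neurips_2020:.2f}x)")  # +127.9% (2.28x)

# DOAJ figures quoted above, read as increases over the earlier period.
print(f"419% increase = {multiple_from_increase(419):.2f}x the earlier traffic")
print(f"968% greater  = {multiple_from_increase(968):.2f}x the earlier traffic")
```

So a 419% increase is a bit more than five times the earlier traffic, not four.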

🔍 Search:

79% of almost 100 top news websites in the UK and the US are blocking at least one crawler used for AI training. Reports of SEO’s death are greatly exaggerated, though traffic to reference materials is down by 11% 📉
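For anyone wondering what "blocking a crawler" looks like in practice, it is usually a robots.txt rule. Here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content is made up, and GPTBot and CCBot are just example crawler names I picked, not the list used in the study. Note that robots.txt is advisory: it only stops crawlers that choose to honour it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration; not taken from any real site.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Example crawler names only; the study's own crawler list is not reproduced here.
AI_CRAWLERS = ["GPTBot", "CCBot"]


def blocked_crawlers(robots_txt: str, agents: list[str], path: str = "/") -> list[str]:
    """Return the agents that the given robots.txt disallows for `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, path)]


blocked = blocked_crawlers(SAMPLE_ROBOTS_TXT, AI_CRAWLERS)
print(blocked)            # ['GPTBot']
print(len(blocked) >= 1)  # True: this site blocks at least one listed crawler
```

To check a live site instead, `RobotFileParser` also offers `set_url()` and `read()` to fetch the file over the network.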

“Large [news] publishers who blocked AI bots from crawling their sites saw total website traffic drop by 23% — and that’s not just from removing bot visits. Human traffic declined by 14% too.” [This is a summary generated by Hacks & Hackers AI Papers Explained, their experiment in using AI to help translate the latest AI research from arXiv into plain language for journalists and technologists, original paper]

Google says it’s exploring new controls that would let websites specifically opt out of having their content used in Search’s generative AI features like AI Overviews and AI Mode, as a response to UK regulatory pressure, but details and timing are still unclear.

🤖 AI:

John Fairbanks from Silverchair, on detecting GenAI-generated content: “Last week, I watched a room full of publishing professionals run the same abstract through GPTZero, CopyLeaks, and Pangram. One tool said 100% human. Another said 100% AI. Same text.”

Darrell Gunter argues that scholarly publishing needs a neutral governance body for the AI age. I am not convinced we need this; my thoughts here.

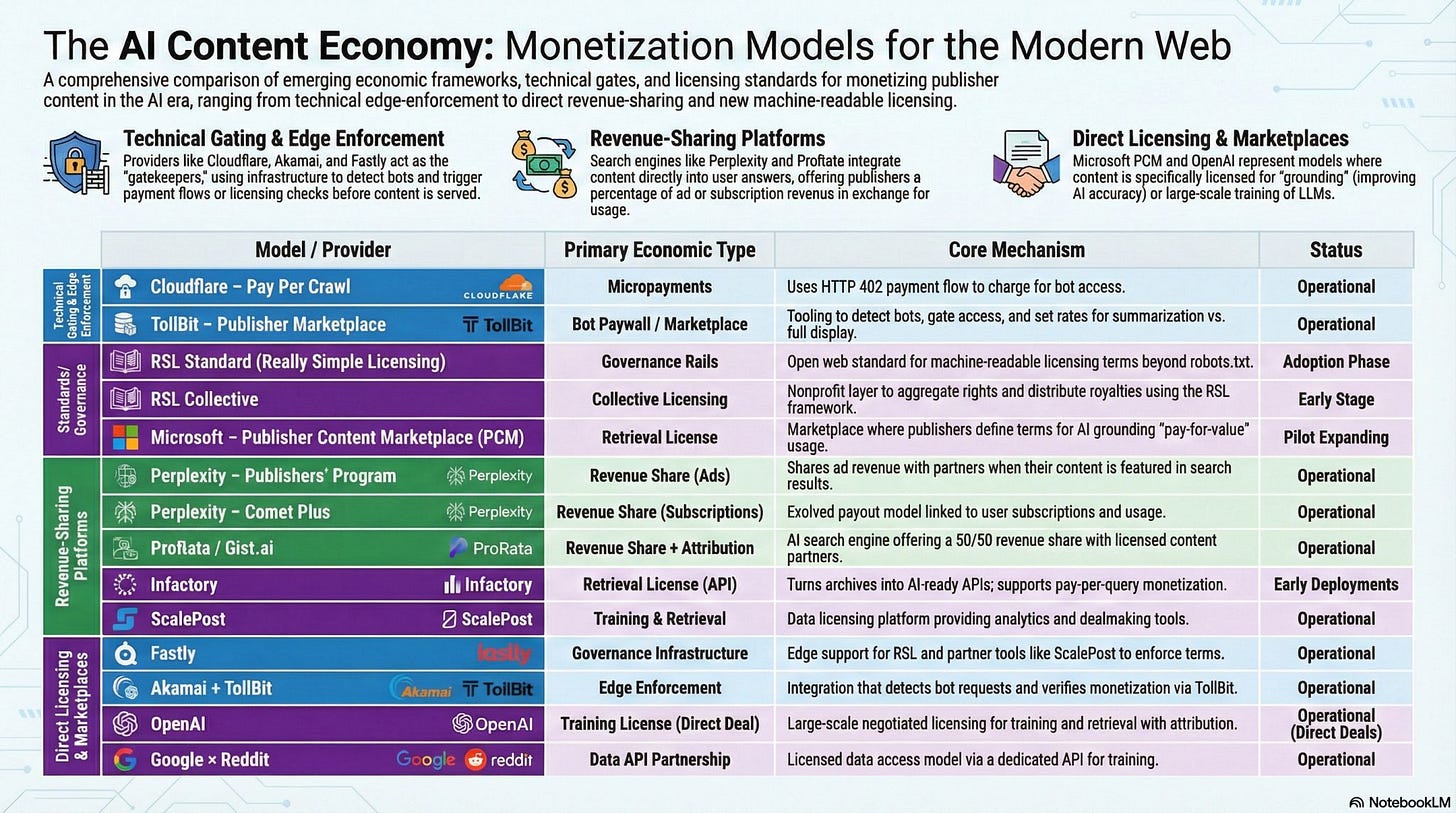

Microsoft has announced its Publisher Content Marketplace (PCM), a solution intended to give publishers a new revenue stream by providing AI systems with scaled access to premium content. Ezra Eeman captures the current landscape in this infographic:

Not mentioned in the infographic is Cashmere. Cashmere founder Jonathan Woahn has two articles in The Scholarly Kitchen, covering why AI Isn’t Going to Pay for Content … At Least Not How You’re Hoping It Will, and The Path Forward.

Geoffrey Bilder’s Instead suggests that websites should provide AI bots with a direct link to a downloadable, static version of their content, reducing the need for repeated crawling of live pages.

Moara.io compares Claude against Elicit, Consensus, SciSpace, Undermind, and Scholar Labs. Claude came out on top, ranking higher than specialised platforms like Elicit and Consensus in an evaluation of the top 10 search results on the economic impacts of climate change. [Also see commentary by Aaron Tay & others on Bluesky]

Wikipedia has agreed licensing deals with several major artificial intelligence companies. The Wikimedia Foundation will now be compensated for access to its data, which will be used to train AI systems. TNPS draws out the lessons for book publishers.

Haseeb Md. Irfanullah argues that 100% AI-Reviewed Preprints are the Future of Open Research. I agree that preprints are increasingly accepted and unavoidable in some fields, and that human-run journals and peer review have problems, but I don’t agree that the current system is inherently unjust. I agree that AI-based review can plausibly operate at scale, but is this desirable? Journal publishing, and preprints to a lesser extent, is relatively decentralised, with evaluation distributed across multiple communities and institutions. Large-scale AI review would tend toward centralised criteria, infrastructure, and decision logic, creating risks of widespread error and reduced transparency. Replacing human peer review would relocate decision-making power to AI systems, raising questions about who would own, govern, and be accountable for these systems. [See also comments from William Gunn about peer review at the bottom of this article on AI Disclosures]

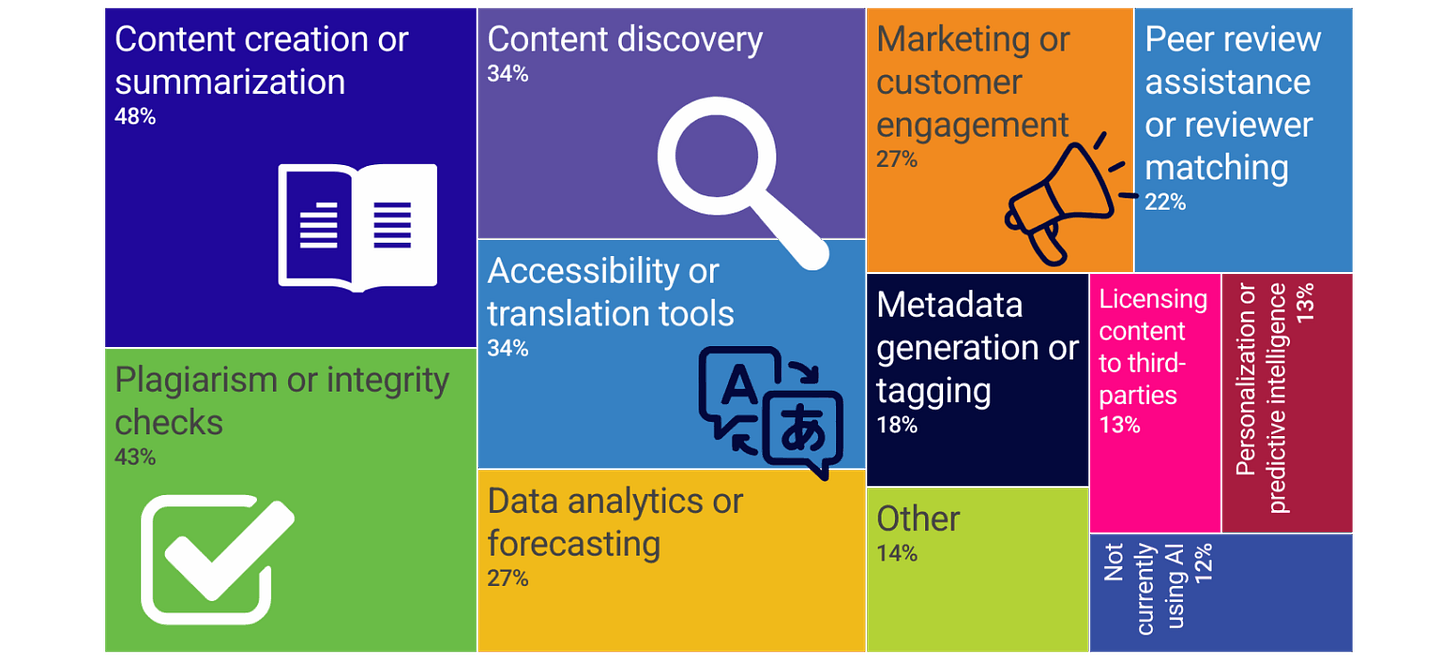

SSP’s first Pulse Check poll covers “AI in Scholarly Publishing”. Conclusion: “The prevailing mindset is neither resistance nor blind enthusiasm, but cautious experimentation shaped by deep concern for scholarly values”:

A personalisation experiment from Amy Webb that really does deserve a moment of your time. “We are on the cusp of aggressive algorithmic personalization, and I think it’s important that we stop and ask how and why decisions are being made. To me, this feels like an early signal of a larger shift: audience-aware intelligence that adapts framing as much as facts. That has implications for leadership communication, media, and trust -- especially as these systems move from assistance to influence at scale.”

Bohrium is one of many companies building infrastructure for agentic science at scale [LinkedIn note | Paper].

“Bohrium—a platform that turns scientific assets—papers, code, instruments—into things agents can call, observe, and learn from. Think of it as Unix for experiments. It understands not just files, but the semantics of data; it supports closed loops between models, simulations, and lab benches; it lets different disciplines share a common workspace.

Above this sits SciMaster—an orchestrator that coordinates long-horizon research across reading, computing, and wet-lab work. SciMaster doesn’t replace scientists. It provides the scaffolding within which scientific work can be executed, inspected, validated, and improved.”

📚 Longer Reads

🎥 This short docu-film sets the stage for Frontiers Science House at Davos. It tells a compelling story about openness, framed from the perspective of a publisher that materially benefits from how openness is currently implemented. Note the shift in focus to Fair Squared, a data-management platform positioned as making scientific data “certified” and “citable,” promising researchers a new way to build careers while enabling data aggregation in the name of faster problem-solving.

Anil Dash on How Markdown took over the world.

The Age of Academic Slop is Upon Us - by Seva Gunitsky includes the wonderful made-up definition: “Automatenwissenschaft (n): A flood of AI-generated, technically adequate papers that nobody reads.”

📄 Another lovely term, PISS: “One person PISSing in the pool of science? Negligible. Tens of thousands? The water takes on a different hue...” Hanson et al. analysed over 100,000 special issues and found that 1 in 7 guest editors publish in their own issues — a practice they dub PISS (Published In Support of Self). The result? Up to 43,000 excess articles.

🎧 Michael Nakan, CEO of Comixit!, is using AI to transform the legendary Beano into “frictionless, digital-native webtoons”.

Jane Hammons, in “Outdated Notion? Teaching Plagiarism as Theft,” challenges the metaphor of plagiarism as “theft”, arguing that this framing is legally inaccurate, culturally narrow, and pedagogically harmful. She proposes a shift toward more supportive language that treats citation as an academic practice rooted in respect and collaboration rather than criminality.

📄 A study of ~280,000 academic YouTube transcripts found that the frequency of certain words, such as delve and adept, shows a statistically significant upward trend starting after the release of ChatGPT in November 2022, with estimated increases of roughly 35%–51% over the ~18 months of post-release data.

🎧 Andrew Smeall: using technology to effect business change | Thinking about Digital Publishing

Steve Smith argues that the traditional PDF is becoming obsolete as the core scholarly unit of record because it obscures machine-readable structure and context, and that publishing needs to shift toward “Knowledge Objects” that encode claims, context, and provenance explicitly for AI to reason reliably with research information. But how do we actually get from today’s messy, incentive-driven publishing workflows to interoperable, trustworthy “knowledge objects” at scale?

Rick Anderson revisits his 2014 “quiet culture war” thesis to argue that divisions within academic libraries over institutional service versus global open access have intensified. He argues that the open access movement has grown more ideological, with little room for dissent, and that professional pressures stifle honest debate. (I have a feeling this article might resonate more with publishers than librarians. For all the good that OA provides, I think many publishers look back with a sense of wistfulness about the world that was.)

Mark Hahnel argues that the next phase of open data must focus on quality, incentives, and stewardship, supported by emerging technologies, if it is to deliver meaningful value to research and society.

Azeem Azhar and Hannah Petrovic on How “95%” [of organizations are getting 0 return from AI] escaped into the world – and why so many believed it. The tale of where this stat came from is the interesting part of the read.

Anita Coleman’s Infophilia post explores how library AI voices show up on Substack, examining how information behaviours and platform dynamics shape visibility in AI literacy discourse.

And finally…

Andrew Maynard asks, Can modern scholarship escape AI? in a new (sadly unpublished) paper and provides a succinct answer:

End Notes

If you found this useful, you can always buy me a coffee.

If you need consulting help navigating any of this, find me at Maverick.

Looking for my AI-powered use cases for Academic Publishing? Find the list here

A couple of items that didn't quite make it in due to space constraints:

🎧 In this FQxI podcast episode (3 October 2025), reporter Geoff Marsh looks at experiments run by Critical Care Medicine and Biology Open, alongside the platform Research Hub, to see what changes when reviewers are financially compensated. In parallel, Research Hub has also tested using AI reviewers in a hybrid human-machine workflow. https://qspace.fqxi.org/podcasts/125/2025.10.03

News Corp is working with Symbolic.ai to deploy a new AI-native platform tailored to the needs of journalists, PR types, and corporate comms teams. The platform is designed to automate time-consuming tasks such as transcription, document extraction, newsletter creation, fact-checking, headline optimisation, and SEO. [H/T: HANA News] https://symbolic.ai/

In December alone, one million channels used YouTube’s AI creation tools daily. More than 20 million users learned more about the content they watched through the new AI-powered Ask tool. https://www.linkedin.com/pulse/152-media-operating-system-ezra-eeman-v2vpe/

In this reflective post, Lily Ray (SEO expert) argues that whilst tools like ChatGPT have reshaped how users search and reduced traditional click-throughs, large language models are still heavily dependent on traditional search infrastructure and established signals of authority. SEO, digital PR, and brand authority remain the foundation of visibility in both traditional and AI-driven search. https://lilyraynyc.substack.com/p/a-reflection-on-seo-and-ai-search