PubTech Radar Scan: Issue 40

January 2026 roundup of publishing tech launches, news, AI developments, and longer reads, somewhat haphazardly collected and curated.

Welcome to 2026, and welcome to new subscribers, especially those from Journalology. It’s monthly roundup time, so here’s what’s been happening in academic publishing tech, from promising new tools to the latest AI controversies. Next issue, I’ll be digging into the bigger trends that everyone’s been writing about.

🆕 News

📅 The Research Data Alliance is relaunching its Research Data Policy Interest Group as a more global forum for discussing research data policies. More information and a registration link are available for the webinar on January 20, 2026, at 11.00 GMT / 12.00 CET.

💰 Sage Publishing has sold Lean Library, a digital tool that helps users access library services, to its management team, including longtime director Daniel Horvath and tech lead Nenad Vidic.

🤖 What’s more of a problem for scholarly publishing - bad bots or bad humans? Results from the STM Research Integrity Day panel.

📊 More than half of researchers now use AI in peer review workflows, according to new data from Frontiers.

🔍 Crossref explains how to spot a fake DOI. For example, every genuine DOI begins with “10.” (a 10 followed by a period), and the registrant code that follows is never just three digits long and never starts with 0.
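For the technically minded, the syntax rules above can be turned into a quick first-pass filter. This is a minimal sketch, not Crossref’s own validator: the regular expression and the `looks_like_doi` helper are my own assumptions, and passing the check says nothing about whether a DOI is actually registered (resolving it via doi.org is the real test).

```python
import re

# Heuristic pattern (an assumption for illustration, not an official Crossref rule set):
# "10." + a registrant code of 4+ digits that does not start with 0,
# optional sub-prefixes such as ".10", then "/" and a non-empty suffix.
DOI_PATTERN = re.compile(r"^10\.[1-9]\d{3,}(?:\.\d+)*/\S+$")


def looks_like_doi(candidate: str) -> bool:
    """Return True if the string passes basic DOI syntax checks.

    This only catches obviously malformed DOIs; it cannot tell whether
    a well-formed DOI has really been registered.
    """
    return bool(DOI_PATTERN.match(candidate.strip()))


for doi in ["10.1000/182", "10.123/abc", "10.0123/abc", "11.1000/xyz"]:
    print(doi, looks_like_doi(doi))
```

A regex like this is cheap enough to run over every reference list at submission, flagging suspicious strings for a human (or a resolution check) rather than rejecting them outright.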

🗳️ Deanta’s excellent Trends in Academic Publishing, 2026 is up and running.

📈 The OA Switchboard’s 2025 report shows it sent over 2.3 million messages between 42,000+ connected organisations, including 42 publishers, 21 consortia, and 280 institutions and funders. (The OA Switchboard is a neutral, non-profit platform for sharing open access publication metadata, founded in October 2020.)

🚀 Launches

CABI has launched SDGenie (beta), a prototype tool that matches research abstracts to specific SDG targets, helping researchers show how their work supports global goals. It’s free to use. Give it a go and share your feedback via the short survey. [Late to this; it launched in November.]

WyderNet is a new AI-powered platform that embeds librarian expertise into students’ digital workflows by interpreting course materials in context. It maps assignments and syllabi to authoritative library resources, aiming to make credibility win out where convenience once ruled.

Andrew Ng has launched Agentic Reviewer to give feedback on research papers (it works best on AI papers). In testing, its ratings agreed with human reviewers slightly better than two human reviewers agreed with each other (Spearman correlation 0.42 vs 0.41), but critics argue this reflects mimicry, not judgement, because the tool copies the tone of past reviews.
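Those 0.42 and 0.41 figures are Spearman rank correlations: convert each set of scores to ranks, then measure how closely the two rankings move together (1 is identical order, 0 is no relationship, -1 is reversed). Here is a minimal sketch of the calculation; the scores are made up, and this is not the evaluation code behind Agentic Reviewer.

```python
def rank(values):
    """Assign 1-based ranks, giving tied values the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over any run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of 1-based positions i..j
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; undefined (ZeroDivisionError) if either list is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the two rank lists."""
    return pearson(rank(x), rank(y))


# Hypothetical review scores for five papers (illustration only).
human_reviewer = [6, 4, 8, 3, 5]
ai_reviewer = [5, 4, 7, 4, 6]
print(round(spearman(human_reviewer, ai_reviewer), 2))
```

Because only ranks matter, a reviewer who scores everything two points higher than a colleague can still correlate perfectly with them; a correlation around 0.4 means the orderings agree only loosely.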

Trilogy has launched Manuscript AI, a new tool that helps book publishers, commissioning editors, and literary agents work through the slush pile of unsolicited manuscripts. It rates submissions on sales potential, likely reader reviews, genre fit, and writing style, using data from 2.7 million copyright-cleared and public domain books. The tool covers major categories, including memoir, nonfiction, drama, romance, and science fiction, and aims to surface hidden gems.

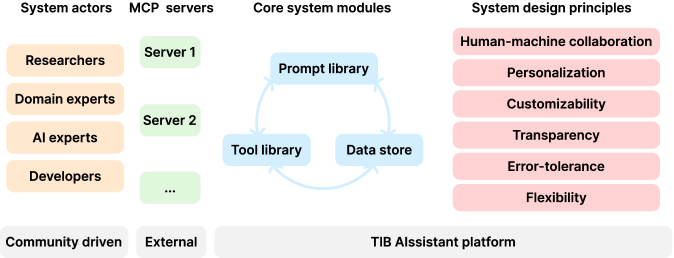

TIB AIssistant is a new open-source platform, built with Next.js and GPT-4o mini, that stitches together a library of AI assistants for every stage of the research process. It integrates external scholarly tools such as ORCID, Crossref, and Semantic Scholar, and lets users export machine-readable provenance data as RO-Crates. [Article/arXiv preprint]
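RO-Crate packages research outputs with a JSON-LD file, `ro-crate-metadata.json`, that describes what is in the package. The sketch below builds roughly the minimal shape described in the RO-Crate 1.1 specification. The file names and helper function are hypothetical, and this is not TIB AIssistant’s actual export format; the spec also recommends further properties such as a licence.

```python
import json


def build_ro_crate(name, description, date_published, files):
    """Build a minimal RO-Crate 1.1 metadata document as a Python dict (sketch only)."""
    graph = [
        # The metadata descriptor: says this file conforms to RO-Crate 1.1
        # and describes the root dataset "./".
        {
            "@id": "ro-crate-metadata.json",
            "@type": "CreativeWork",
            "conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"},
            "about": {"@id": "./"},
        },
        # The root data entity: the package as a whole.
        {
            "@id": "./",
            "@type": "Dataset",
            "name": name,
            "description": description,
            "datePublished": date_published,
            "hasPart": [{"@id": f} for f in files],
        },
    ]
    # One data entity per file in the package.
    graph += [{"@id": f, "@type": "File"} for f in files]
    return {"@context": "https://w3id.org/ro/crate/1.1/context", "@graph": graph}


# Hypothetical export of an AI-assisted literature review session.
crate = build_ro_crate(
    "Literature review session",
    "Prompts, model outputs and the final summary",
    "2026-01-15",
    ["chat-log.json", "summary.md"],
)
print(json.dumps(crate, indent=2))
```

Because the descriptor and every file are plain JSON-LD nodes, other tools can read the provenance without knowing anything about the platform that produced it.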

🤖 AI

📅 Impelsys is hosting a panel discussion, “Publishers’ Opportunity or Dilemma? Licensing Content for AI Training”, on February 5, 2026, at 10:00 am EST / 4:00 pm CET.

Kent Anderson asks, “Can you retract an article from an LLM?” He explores NEJM’s AI Companion and finds that it misrepresents scientific findings, confuses authorship, and fails to flag retracted papers. He argues that opaque LLM technology, poorly understood even by the publishers adopting it, is not fit for purpose and risks eroding trust in the scientific record. See also David Worlock’s response and my response.

🔍 Aaron Tay’s exploration of a very modern user crisis: what are we actually meant to type into AI search boxes?

Peter Schoppert summarises Roy Kaufman’s argument on whether training LLMs on open-access content is legally sound, particularly when attribution is removed. He also revisits Google’s 2018 Talk to Books experiment, an early example of using an LLM as a conversational search tool that predates ChatGPT by several years.

Dr Stylianos explores the flawed promise of AI writing detectors, which rely on statistical signals such as “perplexity” (how predictable or uniform the word choices are) and “burstiness” (how much sentence length and structure vary) to flag machine-generated text. He argues that as models get better at mimicking human nuance, detection tools become less reliable and less useful. [I use Pangram’s service fairly regularly and like it, but I’m aware that when I submit entirely human-written work about AI, it’s often flagged as 100 percent AI, with many common terms marked as suspicious.]
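Perplexity needs a language model to compute, but burstiness is easy to illustrate. A minimal sketch, assuming burstiness is measured as the coefficient of variation of sentence length (standard deviation divided by mean) and that sentences can be split naively on punctuation. Real detectors, Pangram included, may define and combine these signals quite differently.

```python
import re
import statistics


def sentence_lengths(text):
    """Word counts per sentence, using a naive split after '.', '!' or '?'."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence length (one possible definition).

    0 means every sentence is the same length; higher values mean
    more varied, "burstier" prose.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = ("Stop. The committee deliberated for hours over the budget "
          "before reaching a decision. Why?")
print(burstiness(uniform), burstiness(varied))
```

The weakness the article points to is visible even here: a human writing in a plain, even style scores as “uniform”, and a model prompted to vary its sentence lengths scores as “bursty”.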

UKRI is testing whether generative AI can predict reviewer scores on 1,000–2,000 past grant proposals, without seeing the outcomes. Led by Mike Thelwall, the project explores whether AI could support peer review by easing the administrative load, breaking ties, or acting as an additional reviewer.

The FT argues that tools like Claude Code are reshaping academic research by automating once-specialised tasks. While this boosts reproducibility and lowers production costs, it may encourage more marginal research activity with greater output but not necessarily greater insight. As technical barriers fall, will intellectual originality become the key differentiator?

Mark Hahnel argues that research data should prioritise machines over humans, enabling AI systems to accelerate discovery. That means ditching PDFs and vague metadata in favour of structured, queryable, computational assets.

Springer Nature is pulling nearly 40 papers after researchers used a flawed dataset of children’s images from autism-related websites without proper consent. [There are a lot of dodgy datasets out there (e.g., DukeMTMC4ReID, 80 Million Tiny Images, and Brainwash), and publishers probably need to get better at identifying research that has used them.]

📚 Longer reads

Tim O’Reilly’s AI and the Next Economy is a clear-eyed look at the economic risks of AI, framed through a Blakean warning. “We may be building the engines of extraordinary productivity,” he writes, “but we are not yet building the social machinery that will make that productivity broadly usable and broadly beneficial. We are just hoping that they somehow evolve.”

This Euractiv article examines how AI is transforming journalism faster than most institutions or the public realise, and why trust, not just disinformation, is becoming the central issue.

A new preprint by Zhao & Berman finds that LLMs are already disrupting the online news ecosystem. Since August 2024, news publishers have seen a 13% drop in traffic, likely because AI tools summarise content without linking back. Sites that blocked AI crawlers such as GPTBot fared worse, with a 23% decline in total traffic and a 14% drop in human visits, which suggests that blocking may reduce human traffic as well as bot traffic.

Ask A Librarian offers a critique of how generative AI reshapes student research by replacing the exploratory process of scholarly search with answers that feel perfect but may be fabricated. It draws on real librarian experience and Gen Z search habits to argue that learning happens not through instant results but through questioning, frustration, and discovery.

Professor and economist Joshua Gans spent 2025 “vibe researching” (using large language models to quickly draft, revise, and submit academic papers). While the process boosted output, he found it also encouraged bloat, pushed weaker ideas too far, and made it easy to mistake fluency for substance, with confident-sounding but shaky results often slipping through until peer review.

In The Lineages and Inheritances of Shadow Libraries and their Documentation, Martin Paul Eve considers the fractured legacy of shadow libraries after the takedown of Library Genesis, focusing on how successor projects like Anna’s Archive try to stitch together and tidy up metadata from scattered sources.

Rosalyn Metz questions the uncritical worship of “openness” in library and digital infrastructure communities and suggests we rethink openness not as a product to give away, but as a shared responsibility requiring consent, stewardship, and structural care.

Eleonora Dagiene describes how efforts to fix a broken publishing system in Lithuania ended up weakening it further. Reform policies aimed at raising standards instead undermined local journals, leaving researchers with little choice but to rely on commercial platforms. What started as a response to poor practices became a deeper loss of trust and control.

Kevin Baker’s “Context Widows” essay argues that questions about whether LLMs can “do science” miss the point; what matters is how these tools are integrated into an institutional landscape dominated by citation metrics and other evaluative measures that have long distorted scholarly priorities. Rather than challenging this system, large language models function as amplifiers of existing incentives, rewarding volume and form over substantive contribution.

🎥 Oxford academic Professor Patricia Kingori explores Kenya’s shady, multi-billion-dollar fake-essay industry, where educated Kenyans secretly write papers for students around the world. The Shadow Scholars is slow-paced, but it tackles a complex issue.

And finally:

🤦‍♀️ In a new low for academic integrity, Springer Nature’s £125 AI ethics book is riddled with fake citations, including references to journals that do not exist. The publisher says it is “investigating”.

End Notes:

💷 If you found this useful, you can always buy me a coffee.

🔗 Looking for my AI-powered use cases for Academic Publishing? Find the list here.

🤖 If you’re interested in AI, you can subscribe for free to my other Substack, GenAI for Curious People, to see entries for my new book.

🆘 If you need consulting help navigating publishing technology or AI, find me at Maverick.