PubTech Radar Scan: Issue 38

AI-focused issue while I dig out from under a mountain of saved links and half-written entries.

Welcome new subscribers! This newsletter covers publishing technology with my usual idiosyncratic take: entries reflect what caught my eye, and nothing’s sponsored. This issue is 100% AI-focused to tackle a backlog after a bit of a delay in publishing. The next issue returns to the usual mix of launches, news, and analysis. It shouldn’t be quite so long either.

🆕 News/New to me

Holtzbrinck opens AI Hub in San Francisco to bridge its European publishing empire with Silicon Valley’s AI ecosystem. The German media giant will test AI innovations in science, education, and media while scouting investment opportunities.

Russell Michalak at Goldey-Beacom College Library shares a nice story about how JSTOR’s Seeklight helped students describe 172 historical photographs in 4 hours, compared with a rate of 250 per year. The change, from authoring every word to reviewing and governing at scale, hints at how AI is going to change the work many of us do.

Meet the industry’s top innovators and trailblazers as they present their latest breakthroughs at STM Innovator Fair 2025 on December 9, in London. This year’s theme is “Building Tomorrow’s Research Integrity Framework”.

🚀 Launches/newish products

Scopus AI launches Deep Research, an agentic AI feature that performs comprehensive literature reviews automatically. When users ask research questions, the system develops detailed research plans, conducts dozens of searches across Scopus literature, reviews findings, and refines its strategy as insights emerge. The tool produces downloadable reports that Adrian Raudaschl (Principal Product Manager at Elsevier) says can “save days of preliminary research.”

Refine is a research-focused AI tool that aims to offer deep, substantive feedback for academic and technical authors. Unlike grammar tools or compliance checkers, and distinct from broader peer-review simulators like Reviewer3 or ReviewerZero, Refine targets the logic, internal structure, and consistency of a manuscript. (H/T: Aaron Tay)

Nicia.ai has launched an AI assistant for peer review. They claim 95% time savings and position it as “agentic AI” that can make autonomous decisions within defined parameters, continuously learning from each review while always deferring to human judgment on matters of scientific merit and impact.

Paper2Agent converts computational research papers into chat agents you can interrogate with requests like “apply your method to my data”. It only works with well-structured, public code repositories, takes 30 minutes to 3+ hours per paper, and costs ~$15 per conversion. It was successfully demonstrated on three bioinformatics papers, reproducing original results and answering new scientific queries. (This is an early proof of concept, but an interesting direction.) [GitHub | Medium article | Preprint | Comment]

Daniel E. Acuña’s team developed an AI classifier that flagged over 1,000 questionable journals from 15,191 open-access titles, achieving 76% precision while generating 345 false positives. The system, described in Science Advances, analyzes website design and bibliometric features using DOAJ criteria. ReviewerZero, Acuña’s startup, plans to commercialize the tool as ‘Journal Monitor’, part of a suite targeting research integrity specialists.
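A quick back-of-envelope check on those figures, as a minimal sketch. It assumes the 76% precision and the 345 false positives describe the same set of flagged journals, which the summary above implies but does not state outright. Since precision is true positives divided by everything flagged, the false positives make up the remaining 24%, and the other counts follow from that.

```python
def implied_counts(precision: float, false_positives: int) -> tuple[float, float]:
    """Back out total flagged items and true positives.

    precision = TP / (TP + FP), so FP is the (1 - precision) share of
    everything flagged. Assumes both figures describe the same flagged set.
    """
    flagged = false_positives / (1.0 - precision)
    true_positives = flagged * precision
    return flagged, true_positives


# Figures quoted in the entry above.
flagged, true_positives = implied_counts(precision=0.76, false_positives=345)

# Share of the 15,191 screened open-access titles that were flagged.
share = flagged / 15_191

print(f"implied flagged: {flagged:.1f}")
print(f"implied true positives: {true_positives:.1f}")
print(f"share of screened titles: {share:.1%}")
```

Under that assumption, roughly 1,400 journals were flagged in total and a little under 1,100 of them would be genuinely questionable, which squares with the “over 1,000” figure.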

Wiley AI Gateway has launched. More on this in a future issue when I’ve had a bit more time to explore.

👎 Problematic AI

AI chatbots are citing retracted scientific papers without warning users, according to MIT Technology Review. Researchers found ChatGPT-4o referenced retracted papers in five of 21 test cases about medical imaging, advising caution in only three instances. A separate study showed ChatGPT-4o mini evaluated 217 retracted papers without mentioning retractions or quality concerns in the responses.

Iris van Rooij’s “AI slop and the destruction of knowledge” looks at AI-generated misinformation about cognitive science concepts on ScienceDirect.

Kent Anderson describes how he created a fake 15,000-word research paper in 20 minutes using Science42: DORA from insilico.com. His demo study on “music and mental health” contained detailed methodology, SPSS analysis, and 119 references, all from a three-word prompt, to show how easy it is to create a fake paper. (The same technologies that accelerate legitimate research are, of course, going to be used to flood journals with sophisticated fraudulent papers.)

🎥 Dr. Kimberly Becker’s YouTube short “The Dark Side of AI Research Tools” examines how AI can misleadingly amplify scientific evidence through decontextualized citation stacking, creating a “compound interest” effect where misrepresented studies get incorrectly built upon.

🤖 AI and Peer Review

🚨 New acronym alert: ASPR (Automated Scholarly Paper Review), from a paper examining AI-powered peer review.

Research output has increased 212% since 2004, but reviewer capacity hasn’t kept pace. At the September AI Publishing Collective meetup, Chris Leonard, Wiley’s Sam Parker and Rebecca Windless, and Digital Science’s Leslie McIntosh explored how AI might address this challenge. See also Laura Harvey’s excellent write-ups here and here.

If you need some brightness in your life, check out Oleg Ruchayskiy’s LinkedIn post on how academic publishing will die when LLM-based peer review takes over 🙂.

Roohi Ghosh interviews Christopher Leonard and me in The Scholarly Kitchen on “Peer Review in Transition”, exploring how AI will reshape peer review.

The AI Peer project is assessing whether LLMs can score academic journal articles for research quality. Initial findings show LLM scores align positively with expert judgements across almost all fields, with stronger correlation than citation rates achieve. However, the researchers caution that LLMs are “guessing” at scores rather than truly evaluating, likely by matching abstract claims to quality definitions - evidenced by similar results whether using full texts or just titles and abstracts. [LinkedIn post about initial findings]

🔮 Future thinking

Knowledge as a commodity: If scientific publishing is shifting from being the endpoint of research to just one step in a much bigger process, then the entire role of the publisher is fundamentally at stake, according to David Worlock. Worlock asks: as scholarly communications migrate from content to facilitation, which organizations will researchers trust to support their work? (I wouldn’t bet on publishers winning an arms race against big tech players. I might bet on a society brand beating a big tech player for niche but essential tools tailored to their specific community.)

Jon Treadway’s post on AI-first publishing is excellent, and I agree with much of what he’s written, but I think what AI-first research looks like is more interesting than AI-first publishing. In my quickly-put-together vision, researchers use AI tools to explore literature, build personal RAG (or whatever comes next) models, let AI handle study design and paperwork, deploy voice agents for interviews, have AI analyze responses, generate papers in their style, and automatically produce reports. This is technically possible today; the key question is who controls the underlying technology (see David Worlock’s article above).

Phil Jones offers a measured three-year retrospective on ChatGPT’s impact on scholarly publishing, tracking the steady evolution from early hype to today’s practical applications in writing tools, discovery platforms, and research integrity.

📚 Longer reads/listens

📰 Lee Konstantinou in The Chronicle of Higher Education describes peer-reviewing a humanities paper where every single quote from the novel being analyzed was completely fabricated. While fraud discussions usually focus on the sciences, AI-generated fakery is hitting literary scholarship too. His account captures the messy human reality of being the reviewer who has to check every citation and wrestle with sympathy for what’s likely a desperate contingent academic.

🎥 Wiley launches “Chats” video series on AI in academic publishing, featuring BMJ’s CTO Ian Mulvany and Wiley’s Ray Abruzzi navigating practical AI challenges for publishers and researchers. The conversation covers prompt engineering tips, publisher partnerships, and AI hallucination misconceptions.

🎧 O’Reilly’s “Generative AI in the Real World” features Ben Lorica interviewing Faye Zhang on how foundation models are transforming recommendation systems. Zhang discusses the shift from traditional next-click prediction to models that can forecast user behavior over 30-day windows, moving beyond simple content matching to understand long-term preferences. The conversation covers how companies like Pinterest are leveraging these advances in their recommendation engines.

🎥 Emily Bender, one of AI’s most prominent critics, argues “We do not have to accept AI (much less AGI) as inevitable in education” in a recent UNESCO talk.

📰 Mark Piesing explores how AI writing tools are subtly changing authorship in The Bookseller. While some writers embrace AI assistance, others strongly resist, highlighting the concern that AI assistants designed to help writers may actually be training writers to conform to algorithmic preferences rather than developing their own voice.

“I’ve never knowingly used any AI tools while researching my books,” says Alex Larman (Spectator books editor), “because I am arrogant – or experienced – enough to believe that I will always have a better connection with my subject than an algorithm can. I have no doubt that AI will change writers’ lives beyond recognition, but am unable to see this as a good thing; instead, I fear that it will steal the soul from this most human of professions.”

📰 Tomasz Tunguz explores “PageRank in the Age of AI”, arguing that content distribution will soon mirror online advertising architecture. Instead of advertisers bidding to show ads, publishers will compete in real-time auctions to have their content included in AI responses. AI systems would evaluate submissions based on quality metrics - relevance, accuracy, freshness, and authority - rather than payment, creating “PageRank for real-time AI responses” operating in milliseconds.
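To make the idea concrete, here is a toy sketch of what a quality-scored “auction” could look like. Everything in it is a hypothetical illustration: the `Submission` fields mirror the four metrics named above, but the weights, the scores, and the publisher names are invented, and nothing here reflects how any real AI system ranks content.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    publisher: str    # hypothetical publisher name
    relevance: float  # each metric is a made-up score in [0, 1]
    accuracy: float
    freshness: float
    authority: float


# Hypothetical weights; a real system would choose or learn its own.
WEIGHTS = {"relevance": 0.4, "accuracy": 0.3, "freshness": 0.1, "authority": 0.2}


def quality_score(sub: Submission) -> float:
    """Weighted sum of quality metrics. Note there is no payment term."""
    return sum(weight * getattr(sub, name) for name, weight in WEIGHTS.items())


def run_auction(subs: list[Submission], slots: int = 1) -> list[Submission]:
    """Return the top-scoring submissions for the available answer slots."""
    return sorted(subs, key=quality_score, reverse=True)[:slots]


submissions = [
    Submission("Journal A", relevance=0.9, accuracy=0.8, freshness=0.5, authority=0.7),
    Submission("Journal B", relevance=0.6, accuracy=0.9, freshness=0.9, authority=0.9),
    Submission("Blog C", relevance=0.5, accuracy=0.5, freshness=1.0, authority=0.4),
]

winners = run_auction(submissions, slots=1)
print(winners[0].publisher)
```

The interesting design question is who sets the weights: shift them toward freshness or authority and a different publisher wins the slot.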

📰 Michael Upshall asks, “Have LLMs overtaken humans for knowledge organization?” following the ISKO-UK conference. Most speakers emphasized AI limitations, except Tony Russell-Rose’s presentation on 2dsearch, which demonstrated how GenAI can automatically handle complex term selection with better results than manually curated resources.

And finally…

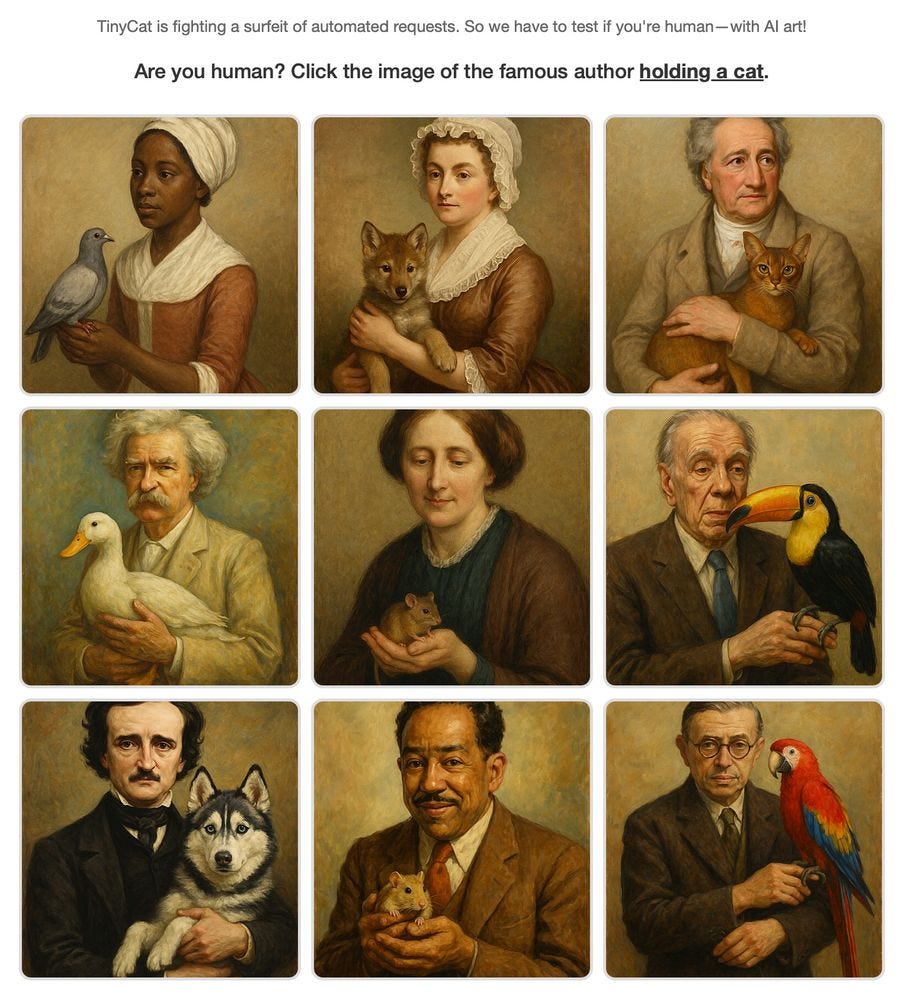

I adore this: LibraryThing’s @librarycat.org is battling AI scrapers, so they built a cat- and literary-themed captcha.

End Notes:

If you found this useful, you can always buy me a coffee.

If you’re interested in AI, you can subscribe for free to my other Substack, GenAI for Curious People, to see entries for my new book.

If you would like to employ me, you can find me at Maverick.

Looking for my AI-powered use cases for Academic Publishing? Find the list here.