PubTech Radar Scan: Issue 13

Can’t quite believe it’s been six months since I last put together a newsletter; time has flown! Here’s the August edition:

Publishing platforms

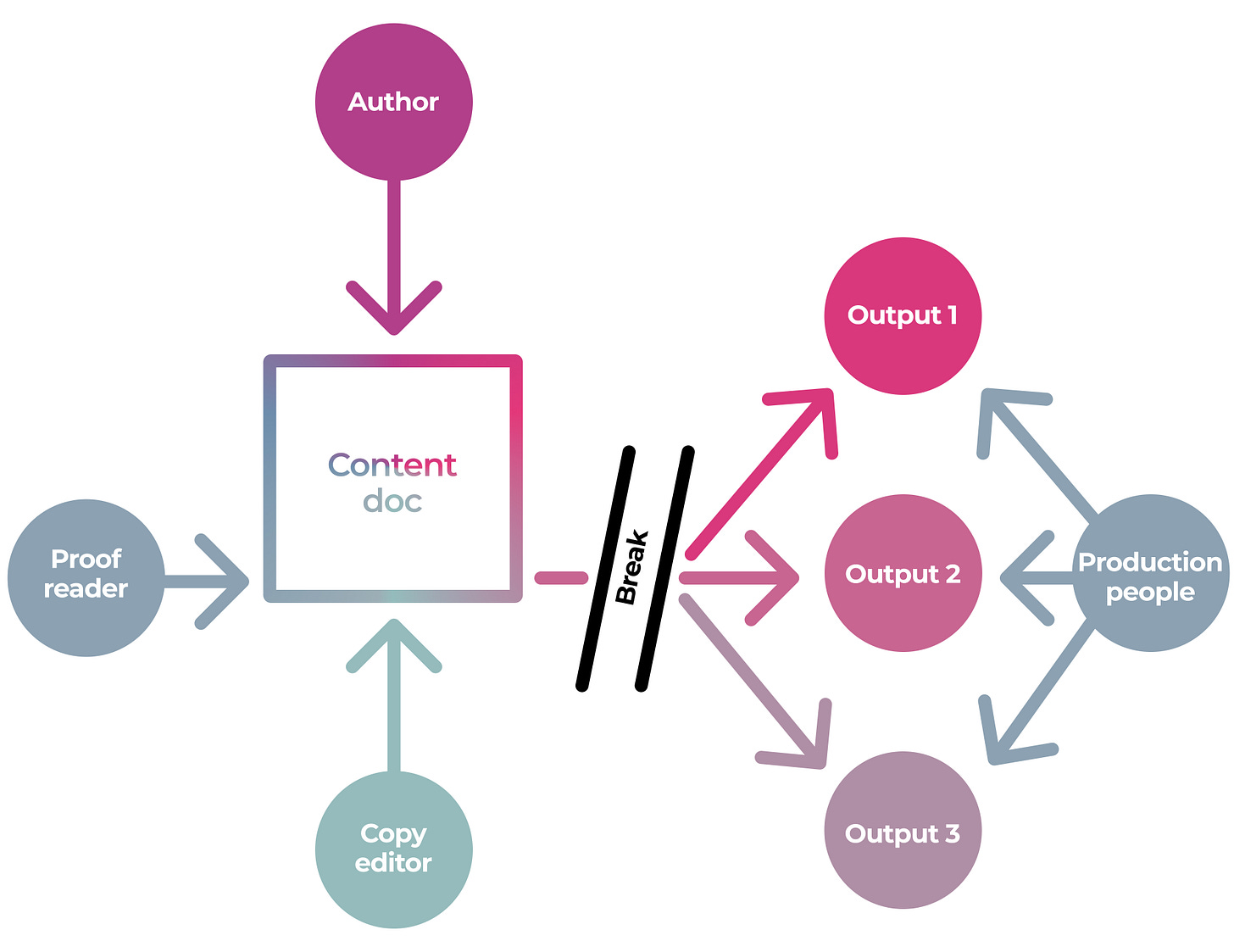

Adam Hyde has a series on the disconnect between content creation processes and production processes, why these are tricky problems to solve, and, of course, how Coko’s ecosystem of tools fixes this.

Octopus has just received £650,000 of funding from UKRI. From a technology perspective, I hope Octopus manages to reuse some of Coko’s, OSF’s, PubPub’s or another platform’s open-source code so there’s a little less inadvertent People’s Front of Judea stuff going on. From a product perspective, I think the publishing platform aspect of Octopus will be a tough sell (authors have a lot of other options), but there are some interesting ideas here. Part of what Octopus is trying to do reminds me of Euan Adie’s thinking around Altmetric.com, where he wanted to bring together all the information and comments about a research paper after publication. I think Octopus is looking to place a research paper alongside previous work in the field, as well as the grant proposals and other work that happened pre-publication, which could turn into something interesting.

Preprints and disinformation

As arXiv celebrates its 30th birthday and concerns about biomedical preprints rumble on within the publishing community, the next three items are worth digesting in one sitting:

Kent Anderson looks at how journals, communications officers, societies and universities are losing their news-making authority and positional leverage as preprint servers become the news sources for science.

Michele Avissar-Whiting talks to Everything Hertz about her role as the Editor-in-chief of the Research Square preprint platform and how she weighs up the benefits and costs of potentially problematic preprints during a pandemic.

Joseph Bernstein has a long piece about Bad News and disinformation in Harper’s Magazine. Are we focusing too much on gatekeepers and algorithms but ignoring the social conditions that allow disinformation to thrive?

SciHub

SciHub is crowdfunding to stay afloat and develop further: “Sci-Hub engine will [be] powered by artificial intelligence. Neural Networks will read scientific texts, extract ideas and make inferences and discover new hypotheses”.

John Hubbard discusses why Sci-Hub Belongs on Your Library’s “Databases A-Z” List. My personal view is that SciHub has acted as a safety valve for paywalled content over the years and has relieved some of the pressure on publishers to switch to OA. If a researcher can quickly and easily get the articles they need via a simple workaround, they’re unlikely to get agitated enough to start campaigning for OA.

AI, automation and data

Andy Tay looks at AI-powered writing tools for Nature Index and mentions an experiment by Writefull that “involves feeding an abstract into an AI tool, which generates a paper title based on the input. This function can enhance title readability, draw readership to the abstract and make the article more visible to search engines”, which could be useful.

Playing around with text paraphrasers to generate and then search for “tortured phrases” is a fascinating and disturbing way to lose yourself for a couple of hours. Take a definition such as “Stratified random sampling is a method for sampling from a population whereby the population is divided into subgroups…” and run it through a text paraphraser to get something like “Stratified irregular testing may be a strategy for inspecting from a populace whereby the populace is separated into subgroups…”. Then google anything that looks a bit tortured, such as “Stratified irregular testing”, and see what comes up.
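If you want to speed up the "spot the tortured bit" step, here is a minimal Python sketch. It assumes you already have the original sentence and the paraphraser's output as plain strings; it doesn't call any paraphrasing or search service. The function names (`tortured_candidates`, `as_exact_queries`) are made up for this illustration. It lines up the two sentences word by word with the standard library's `difflib` and pulls out the runs of words the paraphraser swapped in, ready to paste into a search engine as exact-phrase queries.

```python
import string
from difflib import SequenceMatcher


def _normalise(word: str) -> str:
    """Lowercase a word and strip surrounding ASCII punctuation for comparison."""
    return word.strip(string.punctuation).lower()


def tortured_candidates(original: str, paraphrased: str) -> list[str]:
    """Return runs of words in `paraphrased` that have no match in `original`.

    Hypothetical helper: a word-level diff, not a real tortured-phrase detector.
    """
    src_words = original.split()
    out_words = paraphrased.split()
    matcher = SequenceMatcher(
        a=[_normalise(w) for w in src_words],
        b=[_normalise(w) for w in out_words],
        autojunk=False,
    )
    candidates = []
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        # "replace" and "insert" both mean new wording appeared in the paraphrase.
        if tag in ("replace", "insert"):
            candidates.append(" ".join(out_words[j1:j2]))
    return candidates


def as_exact_queries(phrases: list[str]) -> list[str]:
    """Wrap each phrase in double quotes so a search engine treats it as exact."""
    return [f'"{phrase}"' for phrase in phrases]


if __name__ == "__main__":
    original = (
        "Stratified random sampling is a method for sampling from a population "
        "whereby the population is divided into subgroups"
    )
    paraphrased = (
        "Stratified irregular testing may be a strategy for inspecting from a "
        "populace whereby the populace is separated into subgroups"
    )
    for query in as_exact_queries(tortured_candidates(original, paraphrased)):
        print(query)
```

The diff only tells you which words changed, not which changes are actually tortured, so you still need to pick out the odd-sounding ones by eye before searching.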

What happens when a dataset is deemed problematic by the machine learning community, activists or the media? Kenny Peng, Arunesh Mathur and Arvind Narayanan look at this incredibly complex issue. The answer may entail more long-term community curation and restrictive licenses.

Michael Upshall discusses How to improve Peer Review through more structured reviews, which reminded me of a rather neat project by the ACM SIGSOFT Paper and Peer Review Quality Initiative to generate “empirical standards for research methods commonly used in software engineering”. See also their documents, which give examples of what reviewers should be looking out for and, perhaps more interestingly, list invalid criticisms for different types of studies. I would have thought that a platform like Octopus will need some automated ‘help’ to try to ensure the research (and comments) on the platform are OK, and these kinds of standards could form the basis for automated checking.

Odds and Ends

Bianca Kramer and Cameron Neylon are running a webinar, What do we lose when MAG goes away?, on Friday 3 September.

RELX has a Medium Blog (when did that happen?) with a recent post describing some of their latest innovations, including their 3D anatomy app and a VR simulation platform for training nurses.

MethodSpace interviews Daniela Duca about creating SAGE Texti: A free tool for cleaning and pre-processing textual data.

Read more about the SAGE rejected article tracker built by Adam Day and Andy Hails.

Michael Cairns’ soon-to-be-launched Publishing Technology Software & Services Market Report has some great-looking graphics, including a rather nice subway map of publishing technology companies.

ResearchRabbit has finally launched. See also Aaron Tay’s review.